Jupiter’s Hot Youth May Have Melted Its Icy Moons

As a newborn planet, Jupiter glowed brightly in the sky and outshined today’s sun from the perspective of the gas giant’s largest moons. That early radiance—and upcoming visits by multiple spacecraft—may help to solve a 40-year-old mystery about the makeup of those satellites. For decades scientists have struggled to understand the strange density differences in Jupiter’s four Galilean moons—which, in order from closest to the planet to farthest from it, are Io, Europa, Ganymede and Callisto. Although these natural satellites should have formed from the same feedstock of material and thus have similar compositions, density measurements suggest that Callisto and […]

Supreme Court Preserves Access to Abortion Pill Pending Appeal

Posted on Author Admin

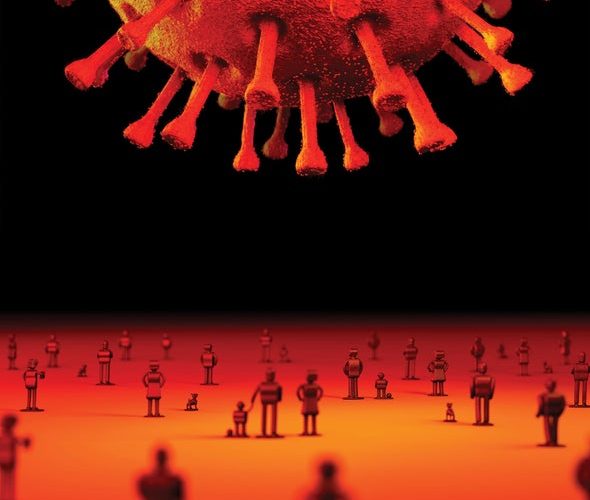

The U.S. Supreme Court on Friday halted a Texas district judge’s ruling that would have restricted access to mifepristone, one of two drugs used together in medication abortion. The halt will last until the Fifth Circuit Court of Appeals can hear an appeal in May. The outcome of that case could have major implications for abortion access in this country and—more broadly—for drug development and the pharmaceutical industry.

The justices’ decision to halt the lower court’s ruling “is a signal that they understand the implications of this decision and its impact not only for reproductive health but for the pharmaceutical […]

Telehealth Is Proving to be a Boon to Cancer and Diabetes Care

Posted on Author Admin

Like many people, I was introduced to telehealth during the pandemic. I met with my psychiatrist virtually, settling onto my couch instead of hers for our sessions. But those appointments required only a conversation. It made sense that psychotherapy easily made a switch to the online world.

What's more surprising is how often telehealth now is being used in other medical areas, such as in cancer care. Although chemotherapy and immunotherapy are typically done in person, follow-up visits and medication and symptom management can be done virtually, says Leah Rosengaus, director of digital health at Stanford Health Care in California, […]

Cool Transportation Hacks Cities Are Using to Fight Climate Change

Posted on Author Admin

More than 270 million registered cars, trucks and buses currently drive along on U.S. roads, or about one for every U.S. resident aged 15 or older. Transportation is the leading source of greenhouse gas emissions in the country—and accounts for 15 percent of emissions globally. So transforming the way we get around is a crucial part of the effort to tackle the climate crisis.

People and governments have recently been taking some encouragingly concrete steps in this direction with projects related to transit and city planning. They provide a basic blueprint for bringing down emissions and other vehicle-created pollution. Here […]

Leopards Are Living among People. And That Could Save the Species

Posted on Author Admin

Where the wild things are is a shifting concept influenced by culture, upbringing, environs, what we watch on our screens, and, for me, the tussle between my education as a wildlife biologist and my experiences in the field. Taking to heart a core tenet of conservation science—that wild animals, certainly large carnivores, belong in the wilderness—I began my career in the 1990s by visiting nature reserves in India to study Asiatic lions and clouded leopards. When in the new millennium I stumbled on leopards living in and around villages, I was shocked. “They shouldn't be here!” my training shouted.

AI Chatbots and the Humans Who Love Them

Posted on Author Admin

Sophie Bushwick: Welcome to Tech, Quickly, the part of Science, Quickly where it’s all tech all the time. I’m Sophie Bushwick, tech editor at Scientific American.

[Clip: Show theme music]

Bushwick: Today, we have two very special guests.

Diego Senior: I’m Diego Senior. I am an independent producer and journalist.

Anna Oakes: I’m Anna Oakes. […]
